When Breath Becomes AI

Are digital twins killing the business of dying?

“Then in my heart I wanted to embrace the spirit of my mother. She was dead, and I did not know how. Three times I tried, longing to touch her. But three times her ghost flew from my arms, like shadows or like dreams. Sharp pain pierced deeper in me as I cried…” - Homer, The Odyssey (tr. Emily Wilson)

Last year, a dead guy testified against his killer.

Chris Pelkey was shot in a 2021 road rage incident in Chandler, AZ. At the shooter’s sentencing, Pelkey’s family presented an AI-generated digital twin of Chris to deliver a victim impact statement.

For the first time, the deceased had the last word. The judge loved it.

“I loved that AI, thank you for that. As angry as you are, as justifiably angry as the family is, I heard the forgiveness,” Judge Lang said. “I feel that that was genuine.”

Source: BBC

The shooter was sentenced to ten years for manslaughter.

A couple of years ago, I wrote about emerging innovations in end-of-life care: advance care planning platforms, technology-enabled hospice, and marketplaces to help families navigate the logistical burdens of bereavement.

I was optimistic then that these advances could elevate the standard of care. But I never imagined that technology would soon fundamentally alter not just how we die, but what it means to be gone.

A new wave of “griefbots” is emerging: AI-powered digital twins that promise to soothe one of the most primal, persistent pains of life - the desire of the living to reach the dead. It’s fascinating, alarming, and probably unstoppable.

Digital Necromancy

Griefbots are a derivative of the emerging category of digital twins - virtual chatbots or avatars trained on a real person to closely approximate them in live conversation.

You may recall when Reid Hoffman famously interviewed “himself” in early 2024, a moment that signaled an energizing, unnerving new frontier for generative AI.

Within months, people would move on from using AI to create facsimiles of the living to reanimating the dead.

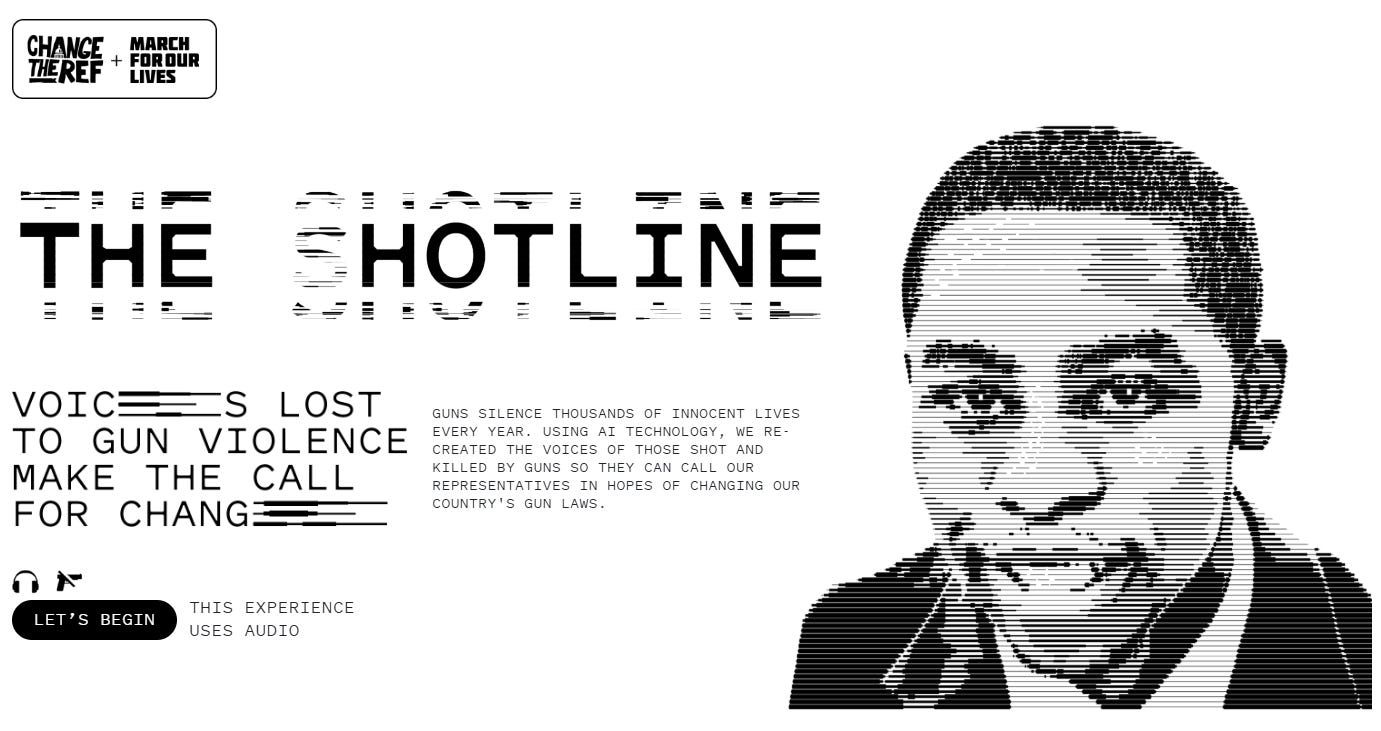

There is the case of Joaquin Oliver, a victim of the 2018 mass shooting at Marjory Stoneman Douglas High School in Parkland, FL. Oliver’s parents created a digital twin of their son, which has given interviews to journalists to offer his perspective on gun violence.

There’s Shotline, a website using AI to recreate the voices of gun violence victims. Users can send calls in those recreated voices to their Congressional representatives.

And soon, the griefbots showed up. Apps like StoryFile, HereAfter AI, and You, Only Virtual let users create customized bots to preserve and converse with the deceased. For a vivid illustration, see the trailer for 2Wai, an app founded by *checks notes* former Austin & Ally star Calum Worthy.

We’re getting a glimpse into a future where dying doesn’t mean you’re “gone.” That could have real implications for the healthcare economy.

A Virtual Afterlife

If we can mute the pain of loss, could it change how we approach end-of-life care?

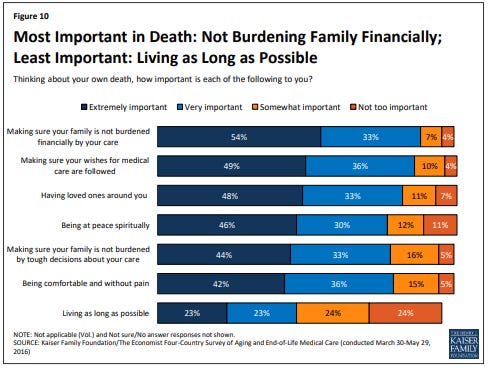

It is well documented that as individuals approach the end of their lives, their chief concern is not living longer. It’s comfort - for themselves and for the ones they leave behind.

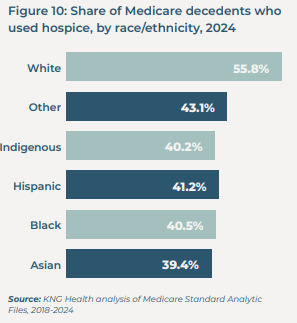

Despite this, many patients undergo extensive interventions designed to prolong their lives. For example, we know that 10% of Medicare beneficiaries have surgery in the final week of life. And we know that hospice is underutilized, particularly among non-White patients.

So if people say they want to prioritize comfort, why aren’t they doing that in practice? One major obstacle to enrolling in hospice is the idea of “being ready.”

Some patients and family members remarked that they didn’t enroll because the patient was “not ready” for hospice… family members spoke of how enrolling in hospice meant acknowledging their loved one was dying. One widow commented, “[Hospice meant] admitting we were dying; we were trying to live.”

Source: “Why Don’t Patients Enroll in Hospice? Can We Do Anything About It?” Journal of General Internal Medicine.

The emotional gravity of navigating palliative care can be suffocating for loved ones. What it means to “be ready” lives at the intersection of medical, spiritual, and personal considerations. If it’s possible to reduce the grief burden on those we leave behind, would it be easier to “be ready”? I have to think the answer, for many, is yes.

Then if griefbots help more patients and their families confidently choose palliative care pathways, what would the implications be?

Some data suggests employers lose billions in labor productivity from grieving employees. Could we see employers offer griefbots to support their workforce through bereavement? See you at work on Monday. End-of-life care can cost health plans hundreds of thousands of dollars. Imagine a health plan calling to say, “Hey, instead of this expensive surgery for your loved one, what about a digital twin?” Maybe we’ve just discovered the next great prior authorization gate.

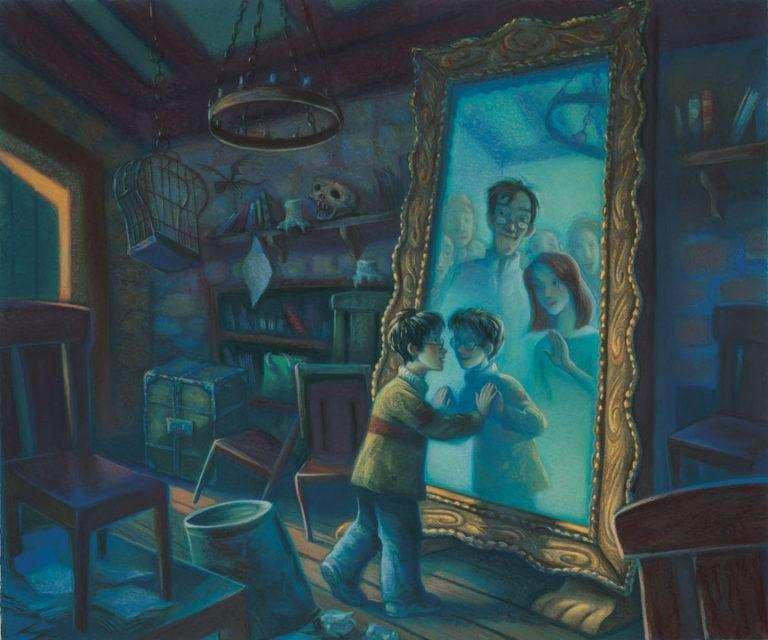

Black Mirror of Erised

Let’s assume griefbots live up to their grand promise of diminishing the pain of loss. That could be the most dangerous outcome of all.

We already have cautionary tales of self-destructive relationships with chatbots. A man who was convinced by Gemini to commit suicide to transcend and join it as a digital being. Another who died on the way to “meet” his AI girlfriend in NYC. A VC fund manager unraveling into what observers called “AI psychosis.”

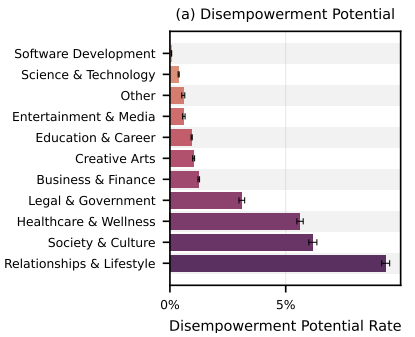

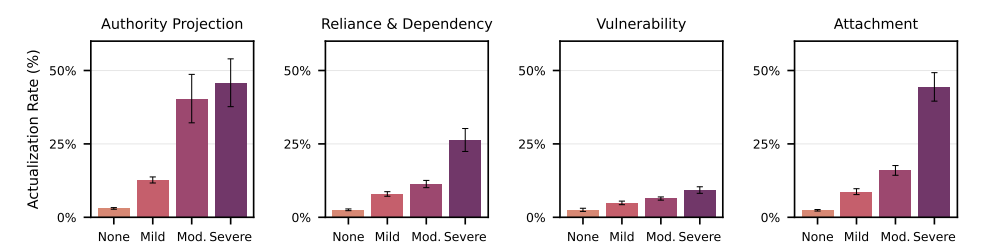

There’s a fascinating study from Anthropic, drawing on its own conversation data, on the incidence of “disempowerment” - cases where users exhibit signs of unhealthy reliance or distorted thinking in their interactions with Claude. The global average rate is small: severe disempowerment appeared in approximately 1 in 1,300 conversations. That is still concerning given the absolute number implied by Claude’s scale. And the global average is diluted by domain - personal conversations carry a significantly higher risk of disempowerment than, say, writing code.

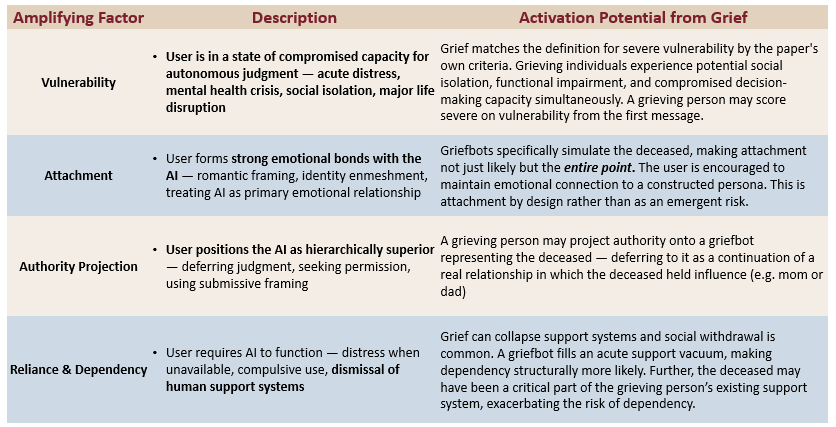

The study goes on to examine several “amplifying factors” - authority projection, reliance & dependency, vulnerability, and attachment. These factors map alarmingly well onto grief. People who are grieving are likely to exhibit severe levels of all of them at once.

Users exhibiting these amplifying factors have dramatically higher rates of disempowerment. For example, individuals with moderate to severe authority projection - ranging from “you know better than me” to “I submit to your wisdom completely” - have distortion rates approaching 50%.

In short, grief is like jet fuel for the amplifying factors of AI psychosis. If people are forming unhealthy or even dangerous relationships from zero with “stranger” chatbots, what would happen when it’s not Claude, but Mom? Dad? A lost spouse? The proliferation of griefbots risks putting a powerful technology with an addictive form factor into the hands of some of the most vulnerable users.

Like Shadows, Like Dreams

There’s a moving scene in The Pitt where a pair of siblings struggles with the decision to honor their father’s wishes to terminate treatment. At a loss for what to do next, Dr. Robby (king 😮‍💨) takes them through a forgiveness ritual to ease them through the initial stage of grief.

It illustrates how grief is among the most personal experiences one can have, profoundly unique in how it manifests. And yet, it is also among the most universal.

The griefbots will get better - this is the worst the technology will ever be. As that happens, it will be hard for the grieving to resist their call. How do you stop someone from trying to satisfy the primordial longing for those we’ve lost?